I want to be upfront about something before you read another word: I’m writing this for me as much as for you.

In six months, my AI setup will look nothing like it does today. New tools will have emerged. Things I swear by right now will have been replaced by something better. Things I haven’t figured out yet will have clicked. And I’ll want a record of where I was when I started.

So consider this a dispatch from the field, not a manifesto. There will be more versions.

I’ve seen this movie before

In 1997, I was explaining to a room full of hoteliers how the internet was going to change their business. One of them looked at me and said, without a trace of irony: “The internet is just a fad.”

That’s the polite version of what he said.

By then I was starting to build BookDirect, one of the world’s first online hotel reservation platforms. I wasn’t speculating about the internet’s impact on hotels. I was living it. And I still couldn’t get the room to see what I was seeing.

AI is not a fad. I won’t insult your intelligence by making that argument in 2026. But the gap between people who are genuinely integrating AI into how they work and people who are dabbling at the edges… is growing faster than most people realize. The dabblers feel like they’re keeping up because they’ve tried ChatGPT. They’re not keeping up.

I’m somewhere in the middle. Closer to the integrators than the dabblers, but honest enough to know I’ve barely scratched the surface. This is what the middle looks like.

The problem AI actually solved for me

I have ADHD.

Not the “I get distracted sometimes” version that everyone claims now. The real version, where your brain is simultaneously running twelve tabs, three of which are playing music, and you’ve forgotten what you opened the browser for in the first place.

I’ve come to think of ADHD as a superpower with a specific design flaw. The superpower: lateral thinking, pattern recognition, generating ideas at a pace that startles people in the room. The design flaw: it is genuinely terrible at finishing things. Not because you lose interest. Because the next idea captures the foreground and the previous project drops out of working memory… until six months later when you find it in a folder and think, “oh right, that was good.”

AI solved that problem for me.

Not all of it. But enough of it to matter.

When I’m in the middle of something and the thread starts to fray — when I can feel my attention drifting and the project starting to stall — I now have a collaborator who holds the thread. I can come back to a conversation that has context. I can say “where were we” and get a useful answer instead of staring at a half-finished document wondering what I was thinking when I wrote paragraph three.

AI’s most underrated capability isn’t generating content. It’s continuity.

What my setup actually looks like

Here’s what I’m actually using, as of this writing:

Claude is my primary AI. I work in a Project that holds context about my business, my positioning, my clients, my writing voice, and my ongoing work. Every conversation builds on what came before. This isn’t ChatGPT with extra steps — the Project architecture means I’m not re-briefing from scratch every session. Claude knows who I am, what I’m building, and how I think. That took time to set up. It was worth every minute.

Plaud Note Pro sits on the back of my phone, magnetically attached. I record conversations, meetings, peer group sessions — and it transcribes them. A disorganized two-hour peer group discussion becomes a structured presentation. A rambling client call becomes a clear set of action items. The recording doesn’t do that. AI processing the recording does. One thing I’ve learned: for calls tied to a specific project, I skip Plaud’s own summary and feed the raw transcript directly to Claude. The difference is context. Plaud summarizes what was said. Claude understands what it means — relative to the project, the players, and what we’re actually trying to accomplish.

SuperWhisper handles dictation. I think faster than I type. Always have. SuperWhisper lets me capture ideas the moment they happen — walking, driving, in between things — instead of losing them to the gap between thought and keyboard.

Craft is where I organize everything. With MCP (Model Context Protocol) integration, Claude can read and write directly to my Craft workspace. My time tracking, my project status board, my content library: all accessible from within a conversation. The data lives in one place and the intelligence lives in another, and they talk to each other.

Artyfacts is where substantive work product gets filed. Analysis, briefs, decision memos, research — anything that should outlive a single conversation gets saved there. It’s the difference between having a conversation and having a record. Claude saves directly to Artyfacts at the end of a working session. The filing cabinet and the intelligence are connected.

Blitzit runs my task layer. What needs to happen today, what’s overdue, what I’ve committed to — all of it lives there. Claude can read and write to it directly. When I ask “what should I actually focus on this morning,” the answer draws on real task data, not memory.

Stanley manages my X presence. I’m not going to pretend I’ve cracked X. But having a system that helps me stay consistent without the platform eating time I don’t have — that’s the job Stanley does.

ChatGPT handles image generation now. When OpenAI launched Images 2.0, it wasn’t a marginal improvement — it changed which tool I reach for first. I still run the same prompt through Grok as a comparison test. It consistently produces an inferior result. I don’t do this to be fair to Grok. I do it to calibrate my eye. Claude still writes the prompt with a precision that’s genuinely useful — I describe what I want, Claude builds the prompt, I paste it into ChatGPT. We iterate until we get exactly what I want. Most people don’t realize you can use one AI to get better work out of another.

The Council of AIs

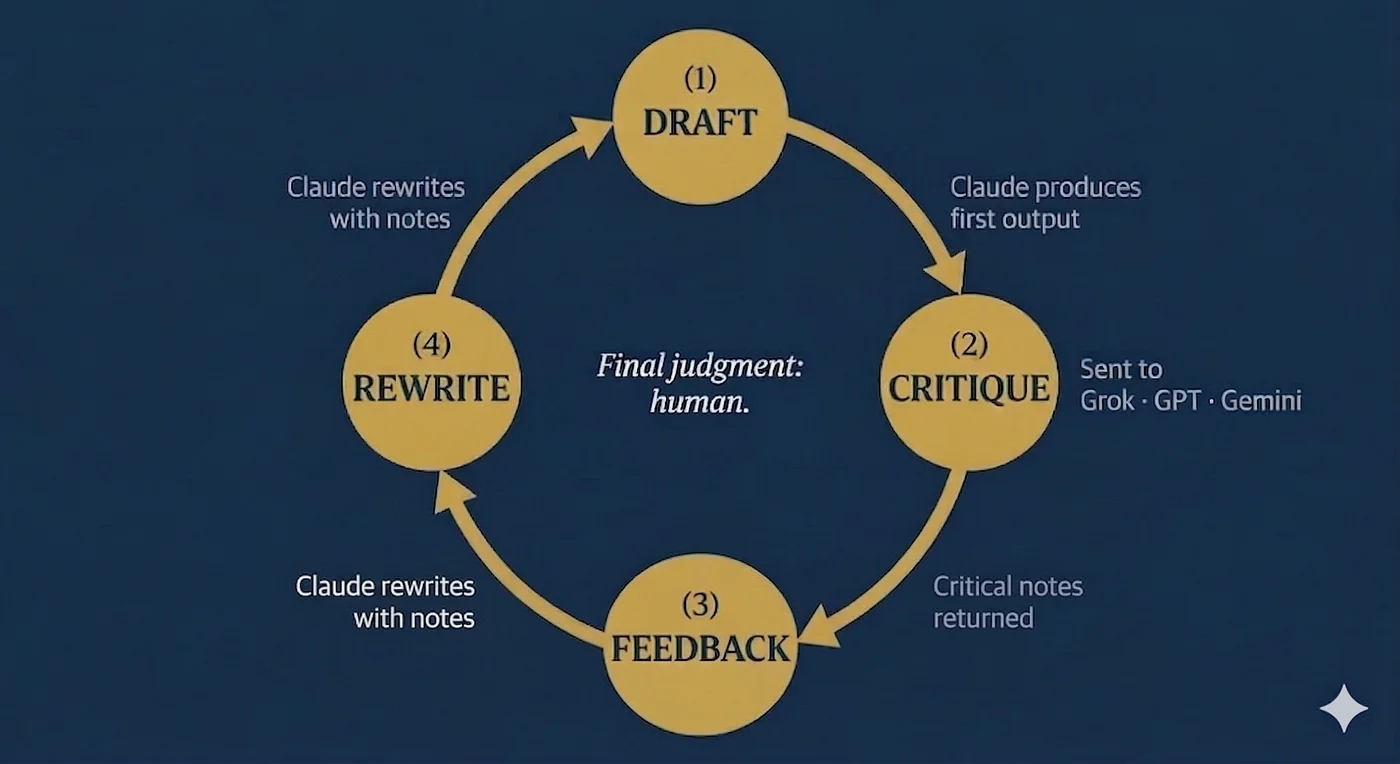

Here’s something I haven’t seen many people talk about: I play the AIs against each other.

I’ll produce something with Claude: an article draft, a piece of copy, a strategic recommendation. Then I’ll take that output to Grok, ChatGPT, or Gemini and ask for a critique. What’s weak? What’s missing? What doesn’t land? Then I bring the feedback back to Claude and ask for a rewrite that incorporates the notes.

I think of it as having a council of advisors who don’t know each other and haven’t coordinated their answers. Each AI has its own tendencies, its own blind spots, its own version of what “good” looks like. Running output through more than one of them surfaces things that any single one would miss — including the one I trust most.

The instinct came from how I’ve always worked. I’ve never wanted just one advisor. I’ve wanted multiple perspectives from people with different vantage points, so I could synthesize the inputs myself. The AIs simply gave me a way to do that at a pace and scale that wasn’t possible before.

The final judgment is still mine. That’s the point.

The thing that actually moved the needle

If I’m honest, this is what took the longest.

The quality of what you get back is almost entirely determined by the quality of what you put in. Vague prompts produce generic output. Specific, well-framed prompts produce specific, useful output. The difference isn’t luck — it’s craft.

The highest-leverage thing I’ve done is build a detailed voice profile for myself. Not a list of bullet points. A real document that captures how I think, write, and communicate, built through a structured interview process. I came across Ruben Hassid’s work on exactly this; he wrote a piece called “I Am Just A Text File” that reframed how I thought about AI context. The idea: if you could reduce yourself to a text file that any AI could read, what would it say? I took that question seriously. The result is a document that lives in my Claude Project and gets loaded at the start of every session.

Beyond the voice profile, I’ve built a customized context brief for each project — so Claude provides output and perspective specific to that engagement, not a generic version of me applied to everything. The more precisely you define the context, the more precisely you get back what you actually need.

The difference before and after that infrastructure is not subtle. The setup cost was real. It was also finite. Don’t skip it.

AI as operating system: the daily practice

I used to say to my hotel staff: “How can you tell if you’re winning if you don’t keep score?” I’ve adapted that into my daily workflow.

I start most working days with a structured check-in — a conversation with Claude that surfaces what’s on the board, what’s overdue, what I’ve committed to, and what I should actually focus on today given everything else in motion. At the end of the day, I sign off. What got done. What didn’t. Time logged against my projects. Notes on anything that shifted.

My project statuses, my time tracking, my content pipeline — I can ask for a weekly summary and get one that reflects what actually happened, not what I meant to do. I can see, at a glance, whether my actual effort matches my stated priorities. Usually the gap is instructive.

Here’s something most people overlook: because my context briefs and project documentation live in Craft — separately from the AI itself — everything I’ve built is fully transferable to any AI platform. My work, my voice, my project-specific frameworks are mine. I will never be locked into a single system. That portability wasn’t an accident. It was a design decision.

What I’ve learned so far

Stop asking it to think for you. Start asking it to think with you. The worst AI users I’ve observed treat it like a search engine with better grammar. Ask a question, get an answer, done. The real value is in the back-and-forth. Push back on what it gives you. Tell it what’s wrong. Ask it to argue the other side. The conversation is the product.

Your voice matters more than you think. Without the investment I described above, AI output sounds like everyone else’s AI output — which sounds like no one in particular. With it, the output sounds like you. That’s not a small distinction if your name is on it.

AI is not a replacement for judgment. It’s an accelerant for it. In the early days of computing there was a saying: “Garbage in, garbage out.” AI takes it to the next level. The machine is faster, smarter, and more capable than anything we’ve had before — which means the garbage comes out faster, more polished, and more convincingly formatted than ever. Everything AI produces requires a human with domain knowledge and real-world context to evaluate, edit, and own.

The tools change faster than you can master them. I’ve made peace with this. You cannot wait until you fully understand one tool before adopting the next. Pick the tools that solve your actual problems, get reasonably fluent with them, and stay curious.

What still doesn’t work (for me)

AI research requires verification. It will occasionally state something with the confidence of a person who has read every book ever written… and be wrong. Not often. But enough that I never publish anything with a specific fact I haven’t checked.

One of the biggest challenges I had running hotels was teaching front desk staff that it was okay to tell a guest “I don’t know,” as long as it was immediately followed by “I’ll find out and get back to you.” I’ve had to apply the same lesson to AI. I’ve explicitly instructed it never to guess, never to assume, never to fabricate, and to flag when it’s uncertain rather than fill the gap with something plausible-sounding. That single instruction has materially improved the reliability of what I get back.

And it still has no taste. It can approximate taste based on what you tell it. But the final call on what’s good — what actually sounds right, what lands, what should be cut — is always yours. The day I forget that is the day my writing starts sounding like everyone else’s AI output.

The honest summary

I am more productive than I was a year ago. Not marginally. Substantially. The projects that used to stall are moving. The writing that used to take days takes hours. The ideas that used to live in my head and die there are getting captured, developed, and published.

AI didn’t give me better ideas. I had ideas — more than I’ve ever known what to do with. It gave me a system for doing something with them.

That’s the version of this story nobody is telling. Not “AI will replace you” and not “AI is just a tool.” It’s more specific than either of those: AI will give you a fighting chance against your own worst tendencies.

For me, that tendency is leaving things unfinished.

Not anymore.